Deploy your side-projects at scale for basically nothing - Google Cloud Run

I have built hundreds of side projects over the years, and finding a place to manage and deploy them all has always been tricky. From the early days of a GoDaddy hosting package with random PHP files in folders, through having a persistent DigitalOcean droplet running, and even running a bare minimum Kubernetes cluster on Google Kubernetes Engine (GKE) - I’ve never been satisfied with the outcome.

I have a few requirements when it comes down to deploying random side projects:

- Fully-managed - I don’t want to have to worry about servers anymore - it’s 2020 after all - and I want serverless all the things

- Cheap - These projects aren’t making me money, so I need to keep costs down

- Language agnostic - One day I may be playing with something in Node, the next Python, then Go.

- Scalable - in the unlikely event something does take off, I don’t want to have to worry about it falling over

Thankfully, I’ve found a solution that I am happy with - Google Cloud Run.

What is Google Cloud Run?

Google is terrible at marketing…

Cloud Run is a fully managed compute platform that automatically scales your stateless containers. Cloud Run is serverless: it abstracts away all infrastructure management, so you can focus on what matters most—building great applications.

What it means is you can give it a Docker container image (technically any OCI-compatible image) and it will deploy, run and scale it.

Why is it good?

If we evaluate this by my requirements:

Fully Managed

After using Cloud Run for over a year now I have never had to touch a server, VM, cluster or anything else. This is truly a deploy and forget service.

Because it is fully managed, there are a few requirements to make your application compatible. The only real one you have to worry about is ensuring your application runs an HTTP server and listens on the port set in the PORT environment variable, which is present in your container at runtime.

Cheap

The beauty of Cloud Run is that it is only ‘running’ your container when it gets traffic. The pricing model is set up so that you only pay for the CPU, memory and network bandwidth used while your app is handling requests.

It achieves this by deploying your app on demand when traffic hits the domain name they give you. The instance hangs around for an unspecified period after traffic stops, and then the app is torn down. The other way to look at this is autoscaling - when there is no traffic, it scales to 0.

There is a cost in the form of time - if your app takes time to ‘set up’ when it starts, you will be making your users wait while Cloud Run scales your application from 0 to 1+ instances. From my own use I’ve found this to be negligible though.
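If startup time does become noticeable, one common pattern is to defer expensive setup until the first request that needs it, so the server can start listening sooner. This is not something Cloud Run requires, and the trade-off is that the first request pays the setup cost instead. A minimal sketch, where `lazy` and `getConfig` are hypothetical names and the setup function is only a stand-in for real work such as opening a database connection:

```javascript
// Hypothetical helper: run an expensive async setup at most once, on first use.
function lazy(setup) {
  let cached; // in-flight or resolved promise, shared by all callers

  return function get() {
    if (!cached) {
      cached = Promise.resolve()
        .then(setup)
        .catch((err) => {
          cached = undefined; // forget the failure so the next call retries
          throw err;
        });
    }
    return cached;
  };
}

// Stand-in for slow work such as connecting to a database.
const getConfig = lazy(async () => ({ loadedAt: Date.now() }));

// Inside a request handler you would write: const config = await getConfig();
```

Concurrent first requests share the same promise, so the setup still runs only once.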

Due to this pricing model I’ve never paid more than a few cents - yes CENTS - a month for all my side projects (10+ deployed currently). This is down to how little traffic they get, so you may need to do the maths for yours - the pricing page is here.
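As a rough sketch of that maths, the function below multiplies out request time against CPU, memory and network rates. Everything here is an assumption for illustration: `estimateMonthlyCost` is a hypothetical helper, the rates are placeholders rather than real Cloud Run prices, and the model ignores any free tier and assumes requests are handled one at a time. Take the actual rates from the pricing page.

```javascript
// Rough monthly cost estimate for a request-driven service.
// Simplifying assumptions: requests are handled one at a time, billed time
// equals request time, and no free tier applies.
function estimateMonthlyCost({
  requestsPerMonth,
  avgRequestSeconds,
  vcpus,
  memoryGib,
  egressGib,
  rates,
}) {
  const billedSeconds = requestsPerMonth * avgRequestSeconds;
  const cpu = billedSeconds * vcpus * rates.perVcpuSecond;
  const memory = billedSeconds * memoryGib * rates.perGibSecond;
  const network = egressGib * rates.perEgressGib;
  return { cpu, memory, network, total: cpu + memory + network };
}

// PLACEHOLDER rates for illustration only - replace with the pricing page values.
const placeholderRates = {
  perVcpuSecond: 0.5,
  perGibSecond: 0.25,
  perEgressGib: 0.125,
};

const estimate = estimateMonthlyCost({
  requestsPerMonth: 1000,
  avgRequestSeconds: 0.5,
  vcpus: 1,
  memoryGib: 0.5,
  egressGib: 2,
  rates: placeholderRates,
});
console.log(estimate);
```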

Language Agnostic

As Cloud Run takes any container image and deploys it, you can use any language you want. Be it Node, Go, Java, PHP or something entirely obscure, as long as it speaks HTTP and listens on the port defined in the PORT environment variable, Cloud Run doesn’t care what you do inside the container.

Scalable

I am yet to have a side project go ‘viral’, but I am confident that should such an event occur, Cloud Run will handle it. The service will create more and more instances of your application up to the limit you define (currently the cap is 1000 instances).

As long as you have architected your application to be stateless - storing data in something like a database (eg CloudSQL) or object storage (eg Cloud Storage) - then you are good to go. Do consider if any services you depend on can handle the traffic should this scenario occur though.
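To make ‘stateless’ concrete, here is a minimal sketch in which a hit counter lives in a shared store rather than in a variable inside the process, so any instance can serve any request. The names `createHitCounter` and `createMemoryStore` and the `increment` interface are hypothetical; in a real app the store would wrap a database client, and the in-memory version below only stands in for it locally.

```javascript
// Hypothetical sketch: state lives in a shared store, not in the instance.
// `store` is any object with an async increment(key) that returns the new
// value; in production it would wrap a database client.
function createHitCounter(store) {
  return function recordHit(page) {
    return store.increment(`hits:${page}`);
  };
}

// In-memory stand-in for the external store, for local experiments only.
function createMemoryStore() {
  const values = new Map();
  return {
    async increment(key) {
      const next = (values.get(key) || 0) + 1;
      values.set(key, next);
      return next;
    },
  };
}

// Two handlers sharing one store behave like two instances sharing a database.
const sharedStore = createMemoryStore();
const instanceA = createHitCounter(sharedStore);
const instanceB = createHitCounter(sharedStore);
```

Because neither handler keeps its own count, it does not matter which one a request lands on or whether an instance is torn down between requests.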

Simple Node.js Example

Enough chat, let’s write some code.

If we take the most basic example of a Node.js Express app serving some JSON - this could be an API server for your statically deployed React frontend, for example.

The Application

You have your usual Node application code - something like:

    const express = require("express");
    const app = express();
    const port = process.env.PORT || 3000; // Cloud Run sets PORT; 3000 is a local fallback

    app.get("/", (req, res) => {
      res.json({
        message: "Hello World",
      });
    });

    app.listen(port, () => console.log(`Example app listening on port ${port}!`));

This is the bare minimum needed to run an HTTP server in Node - in this case when you hit the root path, it returns a JSON response with a “Hello World” message. The only ‘special’ bit is line 3, where the port number we tell Express to listen on is read from the PORT environment variable if it exists - this will be provided by the Cloud Run service at execution time.

Next we need a Dockerfile to create our docker image from:

    FROM node:10

    # Create app directory
    WORKDIR /usr/src/app

    # Install app dependencies
    COPY package*.json ./
    RUN npm install

    # Copy in the rest of the application code
    COPY . .

    CMD ["node", "server.js"]

This is a barebones Dockerfile which uses the node:10 base image, copies our package.json file in, installs our dependencies, copies in the rest of the code and then runs our server.js file when the container starts.

With that set, it is now time to build and deploy.

Building & Pushing our Image

Google Cloud Run works most easily with images stored in the Google Container Registry of our project. As such, you will need to have this enabled on your Google Cloud Project - you can find out how to do this here. You will also need the gcloud CLI installed on your machine and logged in to your account - then run gcloud auth configure-docker to set up Docker to authenticate through gcloud.

Now to build, tag and push our image to the repository. Your container tag will need to be in the following format:

gcr.io/[gcp-project]/[app-name]:latest

Where [gcp-project] is your Google Cloud project ID, eg my-project, and [app-name] is the name of your container image, eg cloud-run-demo.

You then build:

docker build -t gcr.io/[gcp-project]/[app-name]:latest .

and then push:

docker push gcr.io/[gcp-project]/[app-name]:latest

If everything worked without errors, you can now move onto deploying via Cloud Run.

Deploying on Cloud Run

You can do this via the gcloud CLI (using gcloud beta run ... commands) or, my preferred way, the Google Cloud Console.

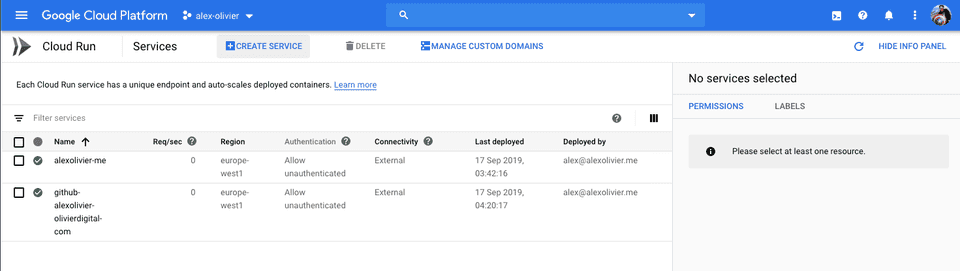

Start by going to the Cloud Run section of your Google Cloud Console:

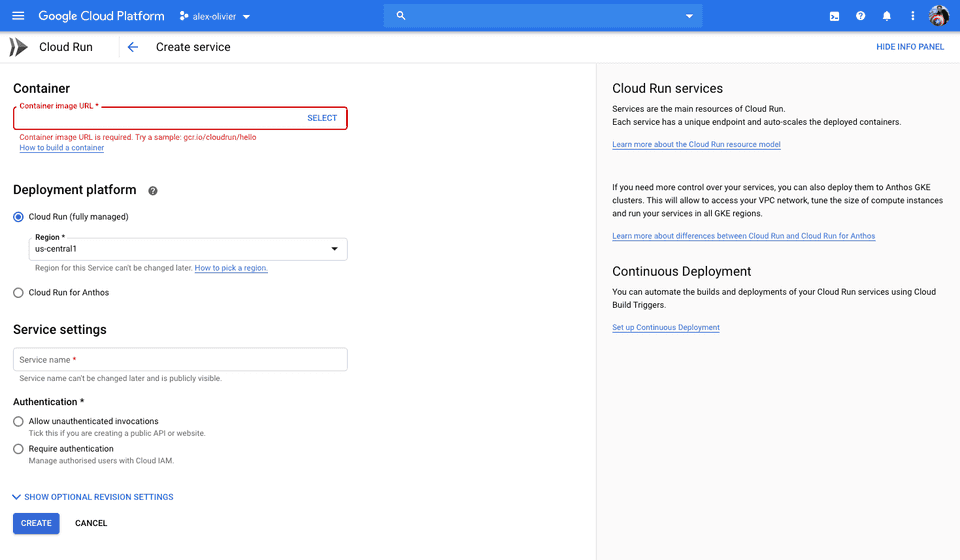

Next press the Create Service button at the top:

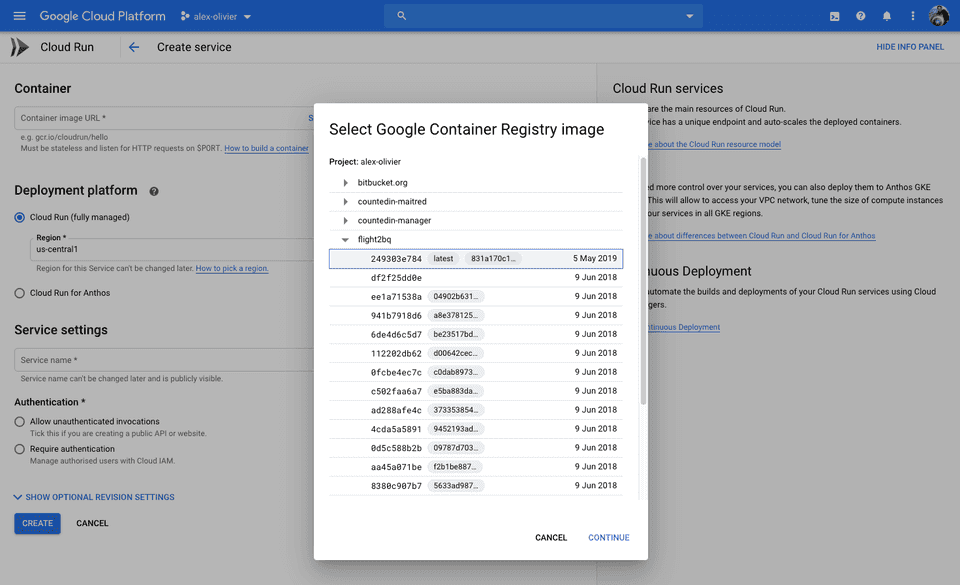

Here you need to select the container image that you just pushed - pressing the Select link in the box will open the picker which will show all the images in your Google Container Registry instance:

Once your image is selected, there are a few other options to populate:

- Deployment Platform - for this example we want a fully managed offering, so select Cloud Run and your desired region. Go for the region closest to where your users will be.

- Service Name - Give your service a name so you can look it up later.

- Authentication - Decide if you want your service to be authenticated via Cloud IAM or not. For all my projects, I want people to access them, so I check Allow Unauthenticated Invocations.

There are some more advanced options to set things like custom environment variables, scaling limits and memory caps, but the defaults are fine for most things.

Hit Create and your service will be deployed. If you get any errors at this point it usually means your application isn’t listening on the port given in the PORT environment variable. Double check this by running the container image locally and passing in the variable.

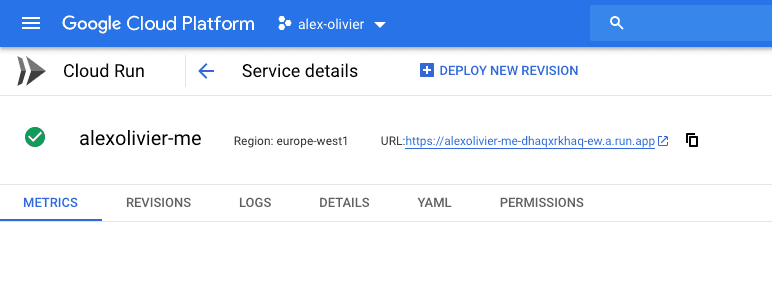

Behind the scenes Cloud Run is deploying your image and will give you an HTTP endpoint, listed at the top of the page:

All the Cloud Run URLs end in run.app. Hitting this endpoint in your browser should return you the response from your server running in the container.

Your app is now fully deployed in a managed service. You can start throwing as much traffic at it as you wish, all without worrying about infrastructure, scaling or maintenance, and all for a very, very small cost. The dream!

There are a number of advanced areas of Cloud Run that I make use of, which may be topics for future posts:

- Continuous Deployment from Cloud Build

- Domain Mapping for using my own domain names

- Connecting to CloudSQL for database storage

- Authentication using Cloud IAM

Conclusion

Hopefully you’ve got a better idea of what Cloud Run is, why it is so powerful and how it can help you with deploying all sorts of crazy side-projects for very little money and hassle.

Hit me up on Twitter @alexolivier if you have any questions or want to talk more.